PwC’s 2026 CEO Survey lands with a thud: CEO confidence in near-term growth has fallen, and most leaders still can’t point to meaningful financial upside from AI.

That doesn’t mean AI is “failing.” It means many companies are deploying it in a way that can’t possibly move the needle.

Because AI doesn’t create value just by existing.

It creates value when it is embedded into the workflows that run the business.

And right now, the survey hints at the core problem: only a small share of CEOs say AI is being applied “to a large or very large extent” across core activities (for example, 22% in demand generation and 19% in products/services/experiences).

So the real question becomes:

What’s stopping AI from escaping the pilot zone and becoming operational leverage?

Reason #1: Off-the-shelf AI rarely integrates deeply enough to matter

Most “AI adoption” in the market is horizontal tooling:

chatbots, copilots, meeting summaries, generic agents, plug-ins.

Useful? Sometimes.

Transformational? Not usually.

Why? Because generic AI sits beside the business, instead of inside it.

If AI can’t reliably:

- read the right internal data at the right time,

- write back into the systems of record,

- trigger the next step in the workflow,

- and do it with security, auditability, and uptime…

…it becomes an accessory, not an engine.
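To make the four requirements above concrete, here is a minimal Python sketch of a single integrated workflow step. Everything in it is hypothetical: the in-memory `crm` dictionary stands in for a real system of record, `draft_reply` stands in for a model call, and `handle_ticket`, `next_steps`, and the audit log format are invented for this illustration. It shows the shape of the idea, not any vendor's API.

```python
from datetime import datetime, timezone

# Hypothetical stand-ins: a real deployment would call a CRM or ticketing API.
crm = {"T-1": {"customer": "Acme", "warranty_active": True, "status": "open"}}
audit_log = []   # every read, write, and trigger is recorded here
next_steps = []  # queue of follow-up workflow steps


def audit(action, ticket_id, detail):
    """Append a timestamped entry so each action can be reviewed later."""
    audit_log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "ticket": ticket_id,
        "detail": detail,
    })


def draft_reply(record):
    """Placeholder for a model call; this simple rule is an assumption."""
    if record["warranty_active"]:
        return "Covered under warranty: replacement approved."
    return "Out of warranty: routing to a human agent."


def handle_ticket(ticket_id):
    record = crm[ticket_id]                    # 1. read the right internal data
    audit("read", ticket_id, "loaded record")
    reply = draft_reply(record)
    record["status"] = "answered"              # 2. write back to the system of record
    record["last_reply"] = reply
    audit("write", ticket_id, "status=answered")
    step = "ship_replacement" if record["warranty_active"] else "escalate"
    next_steps.append((ticket_id, step))       # 3. trigger the next workflow step
    audit("trigger", ticket_id, step)          # 4. leave an auditable trail
    return reply
```

Calling `handle_ticket("T-1")` updates the record, queues a follow-up step, and leaves three audit entries. A standalone chatbot would stop after `draft_reply`; the read, write, trigger, and audit steps around it are where the integration work lives.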

That’s why companies report activity without impact:

lots of experimentation, not much operational compounding.

PwC basically says this in corporate language: isolated, tactical projects don’t reliably produce measurable value; enterprise-scale deployment aligned to strategy does.

What it looks like in practice

- A sales team uses an AI writing tool… but the CRM fields stay messy, follow-up timing doesn’t change, lead scoring doesn’t improve, and conversion stays flat.

- Support adds a chatbot… but it can’t see warranty status, order history, or policy exceptions, so it deflects little and irritates customers a lot.

- Finance runs forecasting models… but the data pipeline is brittle, and nobody trusts the outputs enough to act.

In each case, AI isn’t the bottleneck.

Integration is.

Reason #2: Static systems and slow vendors turn AI into a feature—when it needs to be a capability

This reason is the quiet killer.

A lot of companies are trapped in a legacy operating model:

“Launch the system, then switch to marketing mode.”

That model worked when software changed slowly.

AI doesn’t.

AI value is iterative:

- models improve,

- workflows change,

- competitive baselines shift,

- customer expectations rise,

- and what was “advanced” six months ago becomes table stakes.

So when a business locks itself into a platform that can’t evolve quickly—because of vendor roadmap limits, technical debt, or contract lock-in—it doesn’t just miss AI upside.

It accumulates AI drag.

This is one reason PwC highlights a widening divide between companies piloting AI and companies scaling it with the right foundations.

The other big reasons many AI programs stall (even with budget)

1) The “pilot trap” and the absence of workflow redesign

If you drop AI into a broken process, you don’t get transformation.

You get faster chaos.

That’s why leaders at Davos have been emphasizing job redesign and training alongside tooling—because AI gains appear when the work is redesigned around it, not when the tool is merely added.

2) Data readiness is worse than most executives think

AI is only as useful as the data it can reliably access:

definitions, permissions, quality, lineage, freshness.

If “customer” has five meanings across five systems, your AI assistant will politely hallucinate around the gaps.
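A quick way to surface this kind of definition drift is to collect each system's working definition and group the systems that agree. The sketch below is illustrative only: the system names and definitions are invented, and in practice they would come from a data catalog or from interviews with system owners.

```python
# Hypothetical definitions of "customer" collected from five systems.
definitions = {
    "crm": "any contact with an open opportunity",
    "billing": "account with at least one paid invoice",
    "support": "anyone who has filed a ticket",
    "marketing": "any contact with an open opportunity",
    "erp": "account with at least one paid invoice",
}


def group_by_definition(defs):
    """Map each distinct definition to the list of systems that use it."""
    groups = {}
    for system, meaning in defs.items():
        groups.setdefault(meaning, []).append(system)
    return groups


groups = group_by_definition(definitions)
for meaning, systems in groups.items():
    print(f"{sorted(systems)} -> {meaning}")

if len(groups) > 1:
    print(f"{len(groups)} competing definitions of 'customer'")
```

More than one group means an AI assistant querying across these systems has no single answer to "how many customers do we have?" until the definitions are reconciled.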

3) Measurement is vague, so value is unprovable

Many teams track adoption (“people used it”) instead of economics:

cycle time, conversion, cost-to-serve, churn, loss rates, error rates.

If you can’t measure it, you can’t scale it.

And CFOs eventually pull the plug.
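As a rough sketch of what tracking economics rather than adoption can look like, the Python below compares two of the metrics named above for a support workflow before and during an AI pilot. The ticket data is invented for illustration, and `summarize` is a hypothetical helper. A real comparison would also need to account for seasonality, ticket mix, and other confounders.

```python
from statistics import median

# Hypothetical ticket records: (hours to resolve, resolved without escalation?)
before = [(30, False), (22, True), (41, False), (18, True)]
after = [(12, True), (20, True), (9, True), (27, False)]


def summarize(tickets):
    """Return median cycle time and the share of tickets resolved without escalation."""
    hours = [h for h, _ in tickets]
    resolved_directly = sum(1 for _, ok in tickets if ok)
    return {
        "median_cycle_hours": median(hours),
        "no_escalation_rate": resolved_directly / len(tickets),
    }


baseline = summarize(before)
pilot = summarize(after)

# Change in each metric (pilot minus baseline).
delta = {name: pilot[name] - baseline[name] for name in baseline}
print(baseline)
print(pilot)
print(delta)
```

On this made-up data, median cycle time drops from 26 to 16 hours and the no-escalation rate rises from 0.5 to 0.75. Numbers of that kind, tied to cost-to-serve, are what a CFO can evaluate; "people used it" is not.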

4) Risk, security, and trust slow deployment

PwC also flags rising cyber risk and “trust concerns.”

Even when the model works, legal and security reviews often delay or block deep integration, especially in regulated industries.

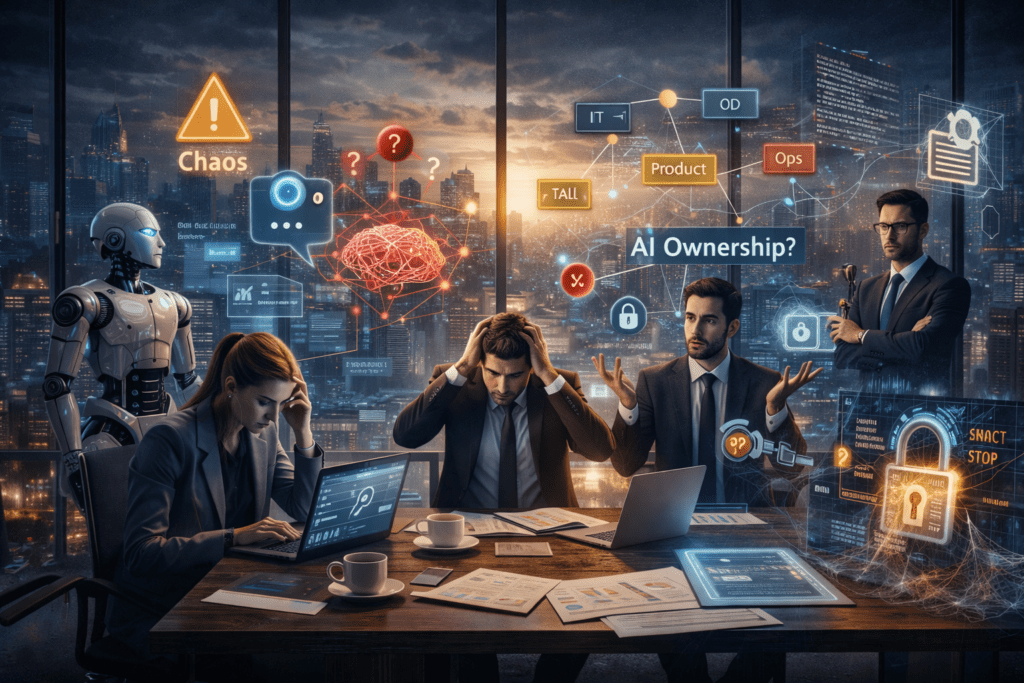

5) Organizational ownership is unclear

Who “owns” AI outcomes?

IT? Product? Ops? A center of excellence (CoE)?

When accountability is fuzzy, pilots multiply and impact evaporates.

McKinsey’s work on capturing AI value repeatedly points back to fundamentals like strategy, operating model, data, tech, talent, and adoption/scaling practices—not just model selection.

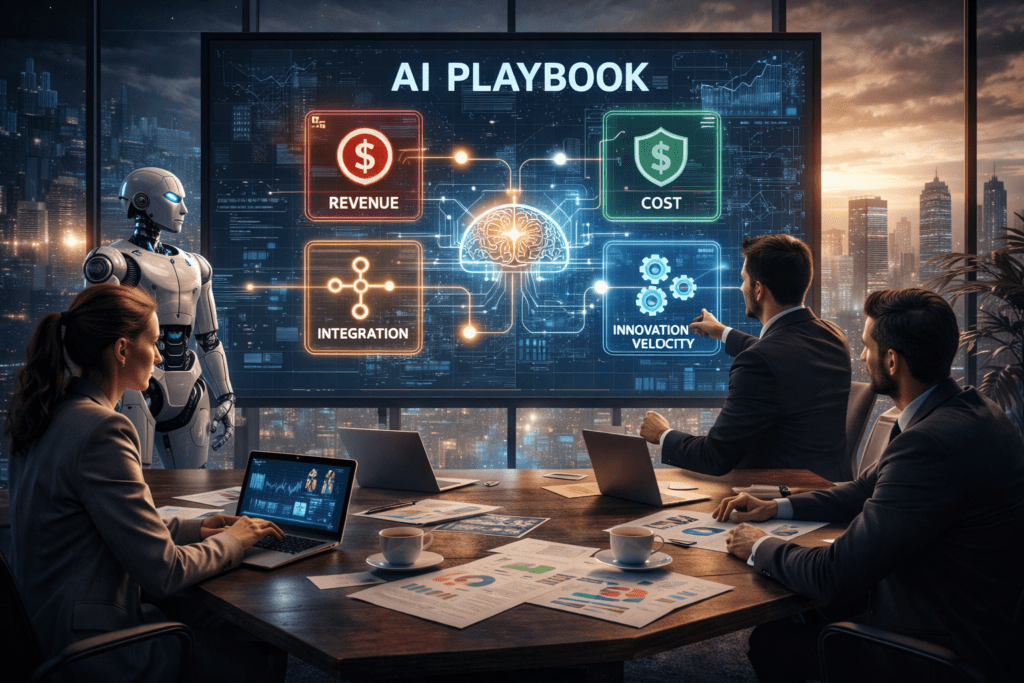

What to do instead: the “AI that pays” playbook

If you want AI to show up on the P&L, you need a different posture:

1) Start with one revenue workflow and one cost workflow

Pick two chains where time and error are expensive:

- revenue: inbound lead → qualify → propose → close → onboard

- cost: ticket triage → resolution → escalation → QA

Then embed AI at the choke points, end-to-end.

2) Make integration non-negotiable

AI must connect to systems of record:

CRM, ERP, ticketing, telephony, inventory, billing, analytics.

If it can’t read/write safely, it can’t compound.

3) Build an “innovation velocity” contract with your vendors

If a vendor’s business model is “ship once, market forever,” you’re buying stagnation.

Demand:

- roadmap cadence,

- extensibility,

- data portability,

- model/provider flexibility,

- and clear exit paths.

4) Build foundations before you bet the company

PwC calls out the importance of foundations: tech environments that enable integration, roadmaps, responsible AI processes, and a culture that enables adoption.

5) Treat AI like product development, not tool procurement

Tools are purchased.

Capabilities are built.

The winners run AI as a product discipline:

backlog, releases, telemetry, iteration, governance.

Bottom line

The survey’s message isn’t “AI is overrated.”

It’s “AI is uneven.”

Most companies are still in the stage where AI is something they try.

The companies pulling ahead are the ones making AI something they run—deeply integrated, continuously improved, and tightly attached to business workflows.

And if there’s one strategic mistake to avoid in 2026, it’s this:

getting locked into static systems while the world’s core capabilities are becoming dynamic.

AI Agents (That Actually Pay Off)

Most companies don’t fail with AI because they lack ambition.

They fail because they buy tools that don’t integrate—and they get trapped in systems that don’t evolve.

AI Agents is built for the opposite.

Deep workflow integration. Fast iteration. Measurable outcomes.

Now, instead of giving you a generic demo, we’ll build something that mirrors your reality.

Get a free, no-obligation custom AI walkthrough video

We’ll create a short video built around your workflows, your tools, and your goals—so you can see exactly where AI can drive revenue, cost reduction, and speed inside your operations.

✅ No sales pressure

✅ No generic “AI overview”

✅ No buzzwords—just real use cases mapped to your systems

In your custom video, you’ll see:

- Where AI plugs into your stack (CRM, support, email, docs, ops tools)

- Which workflows it can automate end-to-end (not just “assist”)

- How we measure ROI (cycle time, cost-to-serve, conversion, resolution speed)

- How we avoid vendor lock-in and static platforms with an “innovation velocity” approach

This is the fastest way to find out if AI can move your P&L—before you spend months in pilot purgatory.

We can only produce 7 custom videos per week, and spots fill quickly.

👉 Claim your free custom AI walkthrough video

Let Intellic Labs show you how to make AI integrate, iterate, and finally pay off.